Imagine being able to view a whole landscape from any angle and from any height. Then imagine being able to move a virtual sun into any position, moving it at will to low grazing angles to enhance subtle features on the ground. We have discovered that a combination of Polynomial Texture Mapping, LiDAR, and 3D software enables us to do just that.

Polynomial Texture Mapping (PTM) is a technique which allows a photograph (normally of an object) to be interactively relit; the way it is illuminated is not fixed, and the viewer can change the lighting angle and intensity. PTM models are created by taking a series of photographs, each one illuminated from a different direction. Using software, the photos are then combined into a mathematical representation of the subject. Software viewers turn this data back into a photograph which you can light from any direction.
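To make the "mathematical representation" a little more concrete, here is a minimal sketch of the idea as PTM is commonly described: each pixel stores six coefficients of a biquadratic polynomial in the horizontal components of the light direction, fitted by least squares to the input photographs. The function names (`ptm_basis`, `fit_ptm`, `relight`) are hypothetical and for illustration only; this is not the code used by any particular PTM fitter or viewer.

```python
import numpy as np

def ptm_basis(light_dirs):
    """Evaluate the six biquadratic PTM terms for unit light directions (N, 3)."""
    lu, lv = light_dirs[:, 0], light_dirs[:, 1]
    return np.stack([lu**2, lv**2, lu * lv, lu, lv, np.ones_like(lu)], axis=1)

def fit_ptm(images, light_dirs):
    """Fit per-pixel coefficients.

    images:     (N, H, W) luminance images, one per light direction
    light_dirs: (N, 3) unit vectors pointing towards each light
    returns:    (6, H, W) coefficient planes
    """
    n, h, w = images.shape
    basis = ptm_basis(light_dirs)            # (N, 6)
    pixels = images.reshape(n, h * w)        # one column per pixel
    coeffs, *_ = np.linalg.lstsq(basis, pixels, rcond=None)
    return coeffs.reshape(6, h, w)

def relight(coeffs, light_dir):
    """Reconstruct an (H, W) image for a new light direction."""
    direction = np.asarray(light_dir, dtype=float)[None, :]
    basis = ptm_basis(direction)[0]          # (6,)
    return np.tensordot(basis, coeffs, axes=1)
```

A viewer then only needs to call something like `relight(coeffs, new_direction)` each time the light is moved, which is why the relighting can be interactive.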

Surfaces and Light

I have worked with 3D surfaces in archaeology for some time (including the 2002/3 Stonehenge laser scan project, and 3D visualisations of the Stonehenge World Heritage Site LiDAR), and know well how crucial light is to our perception of the detail on a surface. One of the first animations that I produced from the Stonehenge stone 53 scan data was of a light circling around the surface at a low angle to reveal the detail of the early Bronze Age carvings.

When I was first shown PTM in 2008, I quickly realised that we could use this technology to look at landscapes in the same way. A ‘virtual PTM’ of objects and small areas of topography had already been created from terrestrial laser scan data but not, as far as I was aware, on a much larger scale. Would the technique scale up to an entire landscape?

Creating Virtual Polynomial Texture Maps

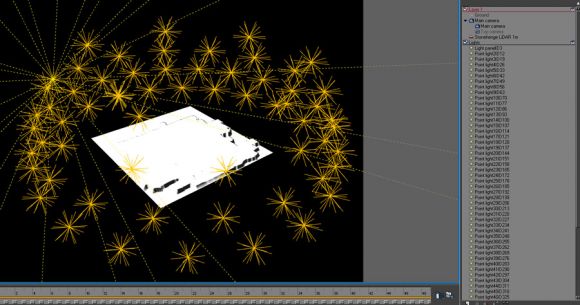

The images needed to create PTMs of smaller, real world objects are often captured using a hemispherical device called an illumination dome. This supports an array of photographic lamps. For my virtual PTM I would need a virtual illumination dome. Using 3D software, I constructed a regular dome of evenly spaced lights.
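As an illustration of what a virtual dome amounts to, the sketch below generates roughly evenly spaced light directions over a hemisphere. It uses a golden-angle (Fibonacci) spiral, which is an assumption made for this example and not necessarily the layout built in the 3D software; the function name, the default of 62 lights (chosen to match the number of renders in this project), and the minimum elevation are likewise illustrative.

```python
import numpy as np

def dome_light_directions(n_lights=62, min_elevation_deg=10.0):
    """Return (n_lights, 3) unit vectors spread over the upper hemisphere.

    Assumption: a golden-angle spiral stands in for the 'regular dome'.
    Lights below min_elevation_deg are omitted to avoid a light on the horizon.
    """
    golden_angle = np.pi * (3.0 - np.sqrt(5.0))
    i = np.arange(n_lights)
    z_min = np.sin(np.radians(min_elevation_deg))
    # Equal steps in height give bands of equal area on a sphere.
    z = z_min + (1.0 - z_min) * (i + 0.5) / n_lights
    r = np.sqrt(1.0 - z**2)
    azimuth = i * golden_angle
    return np.stack([r * np.cos(azimuth), r * np.sin(azimuth), z], axis=1)

lights = dome_light_directions()
print(lights.shape)  # one row per virtual lamp
```

Each row can be scaled by the dome radius to give a lamp position in the 3D scene, with the lamp aimed at the centre of the subject.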

Given our previous work with 3D data of Stonehenge and the surrounding World Heritage Site, it seemed like a good case study to continue with, so Wessex Archaeology funded the development of the idea. Geoprocessing software was used to process the LiDAR tiles (kindly provided by the Environment Agency) into a very large Digital Elevation Model (DEM). This was ‘surfaced’ in our 3D software, turning millions of measurement points into an object on screen that looks solid.

In my virtual environment, a camera was placed directly above the 3D landscape, at the top of the ‘dome’, facing down from a position that would, in reality, be several kilometres in the air. After setting up the ‘environment’ (that is, how the lights affect objects and cast shadows), a digital image of the landscape, called a ‘render’, was made for each lighting position. The result was a sequence of 62 images of the landscape, each illuminated from a different direction.
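The rendering step can be approximated outside a 3D package. The sketch below is a much simplified stand-in for the renders described above: it shades a DEM grid viewed from directly overhead using simple Lambertian (cosine) shading and ignores cast shadows, which the real renders included. The function names, the `cell_size` parameter, and the axis convention (columns as x, rows as y) are assumptions for this example.

```python
import numpy as np

def shade_dem(dem, cell_size, light_dir):
    """Shade a DEM seen from directly above, for one light direction.

    dem:       (H, W) array of heights
    cell_size: ground distance between neighbouring cells, same units as dem
    light_dir: vector pointing from the ground towards the light
    Simplification: Lambertian shading only, no cast shadows.
    """
    dz_dy, dz_dx = np.gradient(dem, cell_size)
    normals = np.stack([-dz_dx, -dz_dy, np.ones_like(dem)], axis=-1)
    normals = normals / np.linalg.norm(normals, axis=-1, keepdims=True)
    light = np.asarray(light_dir, dtype=float)
    light = light / np.linalg.norm(light)
    # Cosine of the angle between surface normal and light; slopes facing away are black.
    return np.clip(normals @ light, 0.0, 1.0)

def render_sequence(dem, cell_size, light_dirs):
    """Return an (N, H, W) stack with one shaded image per light direction."""
    return np.stack([shade_dem(dem, cell_size, d) for d in light_dirs])
```

A low light (small vertical component in `light_dir`) exaggerates gentle slopes, which is why grazing angles bring out subtle earthworks.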

Below is a sample PTM of the land surrounding Stonehenge. It may take a minute to download on a fast connection. Click and drag your mouse around the image (which requires Java to be enabled on your computer) to move the light position. See how the Cursus and Avenue as well as field systems and barrows appear and disappear from view.

Producing a full 1:1 representation of the LiDAR data for the whole Stonehenge World Heritage Site requires considerable processing power and is very time-consuming, but it is something we hope to tackle. We will also publish more PTMs of other parts of the Stonehenge WHS and other LiDAR datasets as time allows, and post to this blog when we do so.

Wessex Archaeology now use this technique on our projects where appropriate. It is particularly well-suited for locating subtle surface features, and investigating anomalies identified using geophysics.

Find out more about Wessex Archaeology's Geomatics services, and other examples of archaeology and LiDAR.